An exciting challenge: how do you validate a vehicle whose control system makes decisions on its own? ZF solves this task with a mix of classic HIL technology and sensor-realistic environment simulation. The test system designed for this purpose is based on the dSPACE tool chain.

Bringing a conventional, driver-operated vehicle with a high level of quality and comfort to road readiness takes considerable effort in the development and validation phases, especially because cost and time targets must always be met. The introduction of autonomous transport systems on the road raises the requirements for quality, efficiency, and safety to an entirely new level. The resulting complexity in the development phase can be managed only by using particularly lean methods and tool chains. After all, the goal is not only to bring autonomous functions to the road successfully, but also to ensure that they can be used safely in all weather, traffic, and visibility conditions.

Platform for autonomous technology

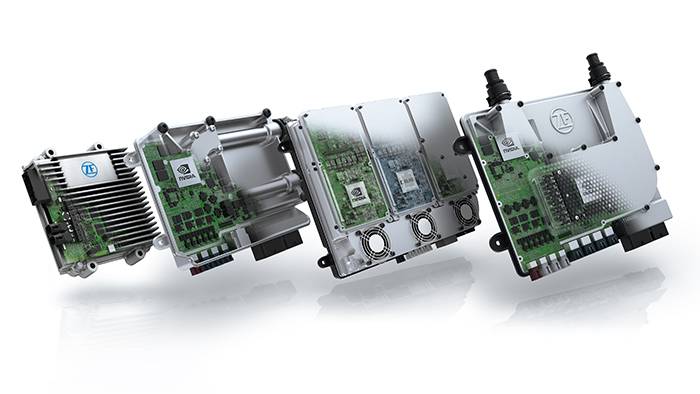

ZF is driving the development of a technology platform for an autonomous, battery-powered people mover. This achievement underlines ZF's comprehensive expertise as a system architect for autonomous driving. To this end, the technology group draws on its sophisticated network of experts, particularly for acquiring and processing environment and sensor data. The project also demonstrates the performance and practical suitability of the ZF ProAI supercomputer, which ZF and NVIDIA introduced only one year ago. The computer acts as the central control unit in the vehicle. The goal is a system architecture that is scalable and can be transferred to any vehicle, depending on the application, the available hardware, and the desired level of automation.

Design of the autonomous system

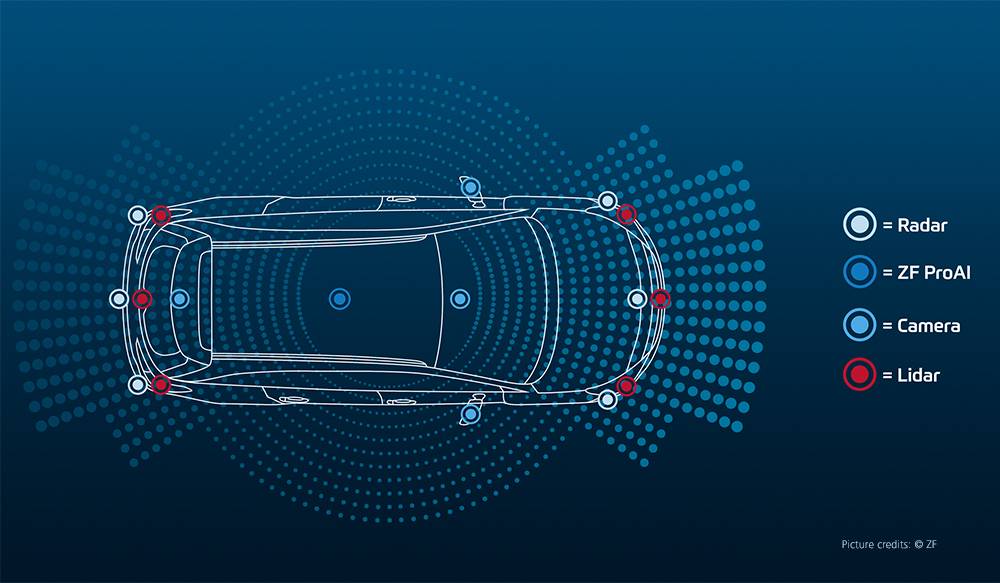

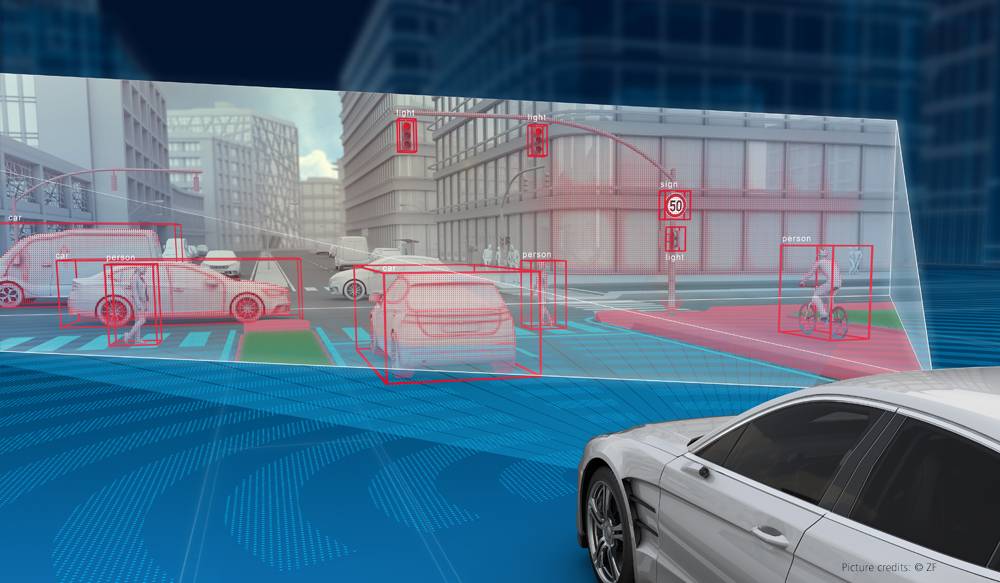

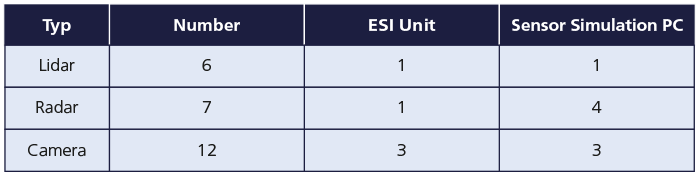

The vehicle is equipped with six lidar sensors, seven radar sensors, and twelve camera sensors for detecting its surroundings. A global navigation satellite system (GNSS) determines the exact position. All sensor data converges in the central ZF ProAI control unit. The control unit preprocesses the data and evaluates it using the typical steps of perception, object identification, and data fusion. It also calculates the driving strategy and, derived from it, generates control signals for the actuators (steering, powertrain, and brake system). Algorithms, some of which are based on artificial intelligence (AI), analyze the sensor data. The AI software primarily speeds up data analysis and improves the precision of object detection. The aim is to recognize recurring patterns in traffic situations from the mass of data, for example a pedestrian crossing the road.
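The processing chain described above (sensor detections, data fusion, driving strategy, actuator signals) can be pictured with a deliberately simplified sketch. Everything in it is an assumption made for illustration: the `Detection` and `ActuatorCommand` types, the averaging fusion, the 10 m safe distance, and the command values are hypothetical and say nothing about how ZF ProAI actually works.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Detection:
    sensor: str        # "lidar", "radar", or "camera"
    object_id: int     # hypothetical track ID shared across sensors
    distance_m: float  # distance of the object ahead of the vehicle


@dataclass
class ActuatorCommand:
    steering_deg: float
    drive_torque_nm: float
    brake_pressure_bar: float


def fuse(detections):
    """Data fusion (toy version): average the distance per object over all sensors."""
    by_object = {}
    for det in detections:
        by_object.setdefault(det.object_id, []).append(det.distance_m)
    return {oid: mean(dists) for oid, dists in by_object.items()}


def plan(fused, safe_distance_m=10.0):
    """Driving strategy (toy version): brake if any object is closer than the safe distance."""
    if fused and min(fused.values()) < safe_distance_m:
        return ActuatorCommand(steering_deg=0.0, drive_torque_nm=0.0, brake_pressure_bar=50.0)
    return ActuatorCommand(steering_deg=0.0, drive_torque_nm=100.0, brake_pressure_bar=0.0)


detections = [
    Detection("lidar", 1, 8.0),
    Detection("radar", 1, 9.0),
    Detection("camera", 2, 40.0),
]
fused = fuse(detections)
command = plan(fused)
print(fused, command)
```

In this toy run, the lidar and radar readings for object 1 are merged into one estimate, and the strategy step brakes because that estimate is below the assumed safe distance.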

Validation concept

An important validation step for electronic control units (ECUs) is the integration test, in which an ECU is tested in combination with all sensors, actuators, and the electrical/electronic (E/E) architecture of the vehicle. This comprehensive view is important for fully validating all driving functions, including the components involved (sensors, actuators), and for evaluating vehicle behavior. Hardware-in-the-loop (HIL) simulation is an established method for integration tests. The development project therefore includes a validation step that uses a HIL test solution.

Artificial intelligence

Artificial intelligence (AI) is a branch of computer science that deals with the automation of intelligent behavior and with machine learning. In general, artificial intelligence is the attempt to reproduce certain human decision-making structures by building and programming a computer so that it can work on problems relatively independently.

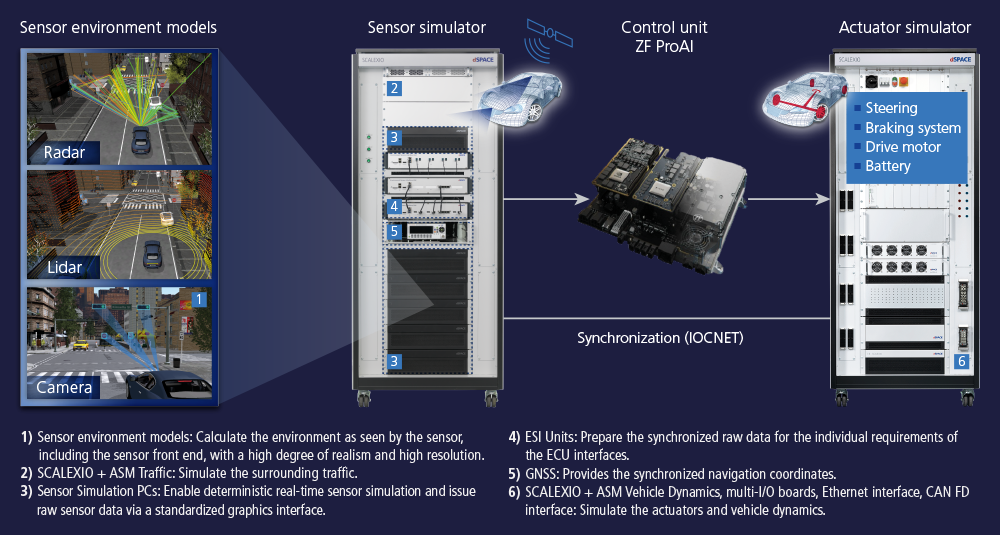

Concept of the HIL simulator

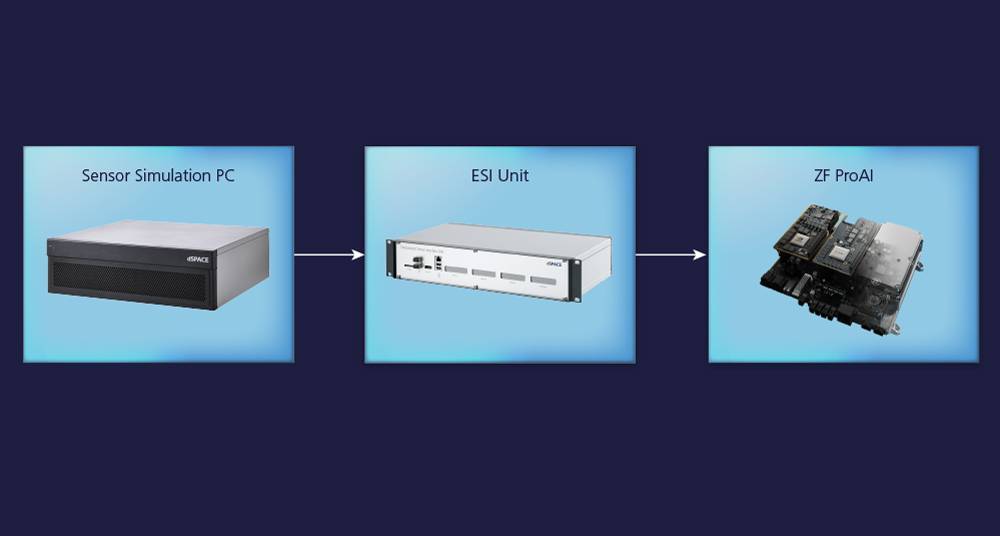

ZF worked with dSPACE to develop the concept for the HIL simulator. The simulator is based on SCALEXIO technology, which makes it possible to simulate the entire vehicle, including steering, brakes, electric drive, vehicle dynamics, and all sensors. On the ECU input side, the simulator transmits all sensor signals. On the output side, it provides a restbus simulation and the I/O required for HIL operation of the vehicle actuators. To ensure a realistic simulation, the dSPACE Automotive Simulation Models (ASM) tool suite computes the vehicle and its dynamics in real time, for both the sensors and the vehicle. This poses a particular challenge because AI systems themselves have neither "hard" real-time properties nor linear dependencies. The AI control unit is therefore "inserted" between the synchronized simulators for the sensors and the actuators and thus operates exactly as it would under real conditions in the vehicle.
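The closed loop that a HIL setup forms (simulated sensor signals in, actuator commands out, plant model updated at a fixed step) can be illustrated with a minimal sketch. It is purely illustrative and not the dSPACE or ZF implementation: the point-mass vehicle, the proportional speed controller standing in for the control unit, and all parameter values are assumptions.

```python
def simulate_closed_loop(target_speed_ms=10.0, duration_s=5.0, dt_s=0.001):
    """Fixed-step loop: plant model -> sensor signal -> controller -> actuator -> plant model."""
    mass_kg = 1000.0        # assumed vehicle mass of the point-mass plant model
    gain_n_per_ms = 2000.0  # assumed proportional gain of the stand-in controller
    speed_ms = 0.0

    for _ in range(round(duration_s / dt_s)):
        # Sensor side: the simulator feeds the current state to the controller.
        measured_speed_ms = speed_ms
        # Controller under test (here only a stand-in): compute the drive force.
        force_n = gain_n_per_ms * (target_speed_ms - measured_speed_ms)
        # Actuator side: the plant model integrates the force over one fixed step.
        speed_ms += force_n / mass_kg * dt_s
    return speed_ms


final_speed = simulate_closed_loop()
print(f"speed after 5 s: {final_speed:.3f} m/s")
```

The fixed step size is the key design point: the simulated sensors and actuators advance in lockstep, so the controller sees a consistent picture of the vehicle at every step.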

Sensor emulation

The ZF ProAI control unit is designed primarily to process all raw sensor data directly. In addition, the sensor data is also read in as object lists. The object lists are provided by the ASM Traffic Model as part of a ground truth simulation of the surrounding traffic. For raw data, all sensors must be emulated as realistically as possible.
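To make the idea of a ground truth object list concrete, the following sketch converts simulated traffic objects from world coordinates into the ego vehicle's frame and keeps those within range. It is a simplified illustration, not the ASM Traffic Model interface: the `TrafficObject` type, the dictionary layout, and the 100 m range are assumptions.

```python
import math
from dataclasses import dataclass


@dataclass
class TrafficObject:
    object_id: int
    x: float    # world position in meters
    y: float
    kind: str   # e.g. "car" or "pedestrian"


def object_list(ego_x, ego_y, ego_yaw_rad, traffic, max_range_m=100.0):
    """Return traffic objects in the ego frame (x forward, y left) within max_range_m."""
    cos_yaw, sin_yaw = math.cos(ego_yaw_rad), math.sin(ego_yaw_rad)
    result = []
    for obj in traffic:
        dx, dy = obj.x - ego_x, obj.y - ego_y
        # Rotate the world-frame offset into the ego frame.
        rel_x = cos_yaw * dx + sin_yaw * dy
        rel_y = -sin_yaw * dx + cos_yaw * dy
        if math.hypot(rel_x, rel_y) <= max_range_m:
            result.append({"id": obj.object_id, "kind": obj.kind, "x": rel_x, "y": rel_y})
    return result


traffic = [
    TrafficObject(1, 30.0, 5.0, "car"),
    TrafficObject(2, 500.0, 0.0, "pedestrian"),  # beyond the assumed range
]
objects = object_list(ego_x=10.0, ego_y=0.0, ego_yaw_rad=0.0, traffic=traffic)
print(objects)
```

Because the list comes straight from the simulation state, it contains no sensor noise or occlusion effects, which is what distinguishes it from the raw data path.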

High-precision simulation of the sensor environment

Generating raw sensor data requires models that calculate the sensor environment on the basis of defined test scenarios and simulate it with physical accuracy. The physical radar, lidar, and camera models of the dSPACE tool chain are used for this purpose. These high-precision, high-resolution models calculate the transmission path between the environment and the sensor, including the sensor front end. The ray tracer of the radar and lidar models maps the entire transmission path from the transmitter to the receiver unit and supports multipath propagation. Several million rays are emitted in parallel; the exact number depends on the respective 3D scene. In both models, the reflection and diffusion of complex objects are calculated on the basis of physical behavior. It is also possible to specify the number of "hops" for multipath propagation. The lidar model is designed for both flash and scanning sensors. The camera model can account for different lens types and optical effects such as chromatic aberration or dirt on the lens. Because all models are very complex, the model components must be computed on graphics processing units (GPUs) to meet the real-time requirements. For this purpose, the Sensor Simulation PC equipped with the NVIDIA P6000 is used, which integrates seamlessly into the dSPACE real-time system.
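Why the number of hops matters can be shown with a small geometric sketch. A range sensor that measures round-trip travel time reports half the total path length, so a signal that takes a detour via a second reflection appears as a more distant "ghost" target. The code below is not a ray tracer and not the dSPACE model: the reflection paths are given by hand, and the function names and the `max_hops` parameter are assumptions for illustration.

```python
import math


def path_length(points):
    """Total length of a polyline through the given 2D points."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def apparent_ranges(sensor, paths, max_hops):
    """Range the sensor would report for each path with at most max_hops reflections.

    Each path is a list of reflection points; the signal starts and ends at the sensor.
    """
    ranges = []
    for reflection_points in paths:
        if len(reflection_points) <= max_hops:
            full_path = [sensor, *reflection_points, sensor]
            ranges.append(path_length(full_path) / 2.0)
    return ranges


sensor = (0.0, 0.0)
target = (30.0, 0.0)
wall = (15.0, 20.0)  # assumed reflecting surface off to the side

paths = [
    [target],        # direct path: sensor -> target -> sensor
    [wall, target],  # multipath: sensor -> wall -> target -> sensor
]
direct_only = apparent_ranges(sensor, paths, max_hops=1)
with_multipath = apparent_ranges(sensor, paths, max_hops=2)
print(direct_only, with_multipath)
```

With one hop allowed, only the true 30 m target appears; allowing two hops adds a second return at 40 m from the detour via the wall.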

Generating the test scenario

The most important part of testing autonomous vehicles is generating suitable scenarios with which functions for autonomous driving can be tested and validated reliably. The Scenario Editor is used for this purpose. It can create complex surrounding traffic with convenient graphical methods. These scenarios consist of the maneuvers of an ego vehicle (the vehicle under test, including its sensors), the maneuvers of the surrounding traffic, and the infrastructure (roads, traffic signs, roadside structures, and so on). The result is a realistic virtual 3D world that is captured by the vehicle sensors. The flexible settings allow a wide variety of tests, ranging from exact implementation of the standardized Euro NCAP specifications to individually structured, complex scenarios in urban areas. The 3D environment, including the 3D objects of the vehicles and the sensor environment models, is simulated with ASM in real time. The trajectories of the road users are simulated with the ASM Traffic Model.
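The three building blocks of a scenario named above (ego maneuvers, surrounding traffic, infrastructure) map naturally onto a small data structure, and varying a few parameters yields a family of test cases. The sketch below is a hypothetical representation for illustration only; it does not reflect the Scenario Editor's file format, and all names and values are assumptions.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Maneuver:
    actor: str
    action: str
    speed_kmh: float


@dataclass(frozen=True)
class Scenario:
    name: str
    ego: Maneuver          # maneuver of the vehicle under test
    traffic: tuple         # maneuvers of the surrounding road users
    infrastructure: tuple  # roads, signs, roadside structures
    weather: str = "clear"


def crossing_variants(ego_speeds_kmh, weathers):
    """Build one pedestrian-crossing scenario per combination of ego speed and weather."""
    variants = []
    for speed, weather in product(ego_speeds_kmh, weathers):
        variants.append(Scenario(
            name=f"pedestrian_crossing_{speed}kmh_{weather}",
            ego=Maneuver("ego", "follow_lane", speed),
            traffic=(Maneuver("pedestrian_1", "cross_road", 5.0),),
            infrastructure=("two_lane_road", "crosswalk"),
            weather=weather,
        ))
    return variants


variants = crossing_variants([20, 30, 50], ["clear", "rain"])
for scenario in variants:
    print(scenario.name)
```

Generating variants from parameter combinations keeps each test case reproducible while the catalog grows.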

The two HIL racks contain all components for sensor simulation:

SCALEXIO real-time platform, Sensor Simulation PCs, and ESI Units.

The ZF ProAI control unit to be tested is located in the left-hand rack.

The simulator for the actuators is not shown.

Components of the sensor simulation

The real-time simulation of the sensor environment is implemented with the following components:

ZF ProAI

The ZF ProAI control unit provides high computing power and artificial intelligence (AI) for functions that enable automated driving. It uses an extremely powerful and scalable NVIDIA platform to process signals from camera, lidar, radar, and ultrasonic sensors. It understands in real time what is happening around the vehicle and gathers experience through deep learning.

Benefits

- AI-capable

- Computing power of up to 150 TeraOPS (= 150 trillion operations per second), depending on the model

- Ready for functions that enable automated and autonomous driving

- Highly scalable interfaces and functions

Validating the autonomous vehicle

The HIL simulator network presented here helps developers analyze the overall vehicle behavior of the virtualized technology platform under conditions that are fundamental to further development. These include scenarios in test areas where the first vehicles delivered must find their way fully autonomously. The simulator also tests whether the vehicles drive safely during unforeseeable events in weather conditions such as rain, snow, or black ice. Other typical HIL test methods are used as well, for example generating faults in the E/E system such as wire breaks, short circuits, or bus system faults. These extensive, continuously growing test catalogs ensure that the safety-critical autonomous system is validated efficiently with regard to functionality.
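Electrical fault generation of this kind can be thought of as a catalog of fault modes applied to a signal, with a check that the receiving side notices each one. The sketch below covers only wire break and short circuits, not bus system faults, and it is an illustration under assumptions: the 12 V supply, the 0.5 V to 4.5 V valid signal window, and the `is_plausible` check are hypothetical and do not describe the actual test bench or ECU diagnostics.

```python
from enum import Enum


class Fault(Enum):
    NONE = "none"
    WIRE_BREAK = "wire break"
    SHORT_TO_GROUND = "short to ground"
    SHORT_TO_BATTERY = "short to battery"


SUPPLY_V = 12.0  # assumed supply voltage


def apply_fault(signal_v, fault):
    """Return the voltage seen at the ECU pin when the given fault is active."""
    if fault is Fault.WIRE_BREAK:
        return None  # open circuit: no defined signal reaches the pin
    if fault is Fault.SHORT_TO_GROUND:
        return 0.0
    if fault is Fault.SHORT_TO_BATTERY:
        return SUPPLY_V
    return signal_v


def is_plausible(measured_v, low_v=0.5, high_v=4.5):
    """Hypothetical ECU-side range check for a sensor with a 0.5 V to 4.5 V output."""
    return measured_v is not None and low_v <= measured_v <= high_v


# Run the small fault catalog against a healthy 2.5 V signal.
results = {fault: is_plausible(apply_fault(2.5, fault)) for fault in Fault}
for fault, plausible in results.items():
    print(f"{fault.value}: {'ok' if plausible else 'fault detected'}")
```

Only the fault-free case passes the range check; each injected fault pushes the signal outside the valid window, which is the behavior such a test would assert.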

The HIL simulator synchronously generates the surrounding environment of the radar, lidar, and camera sensors, including their front end, in real time and subsequently provides it to the ZF ProAI control unit. ZF ProAI controls the simulated actuators according to the driving strategy.

Deep Learning

ZF engineers use the simulator to train the vehicle in various driving functions. The training focuses in particular on urban traffic situations, for example interaction with pedestrians and groups of pedestrians at crosswalks, collision assessment, and behavior at traffic lights and in roundabouts. Compared with driving on highways or country roads, it is much more difficult in urban areas to develop a comprehensive understanding of the current traffic situation, which forms the basis for appropriate actions by a computer-controlled vehicle.

At a glance

The task

- Validating an autonomous, battery-electric technology platform

- Testing the AI-based vehicle guidance

The challenge

- Emulating all sensors in real time

- Setting up a sensor-realistic real-time simulation of the sensor environment (3D environment)

- Simulating the overall vehicle behavior in realistic traffic

The solution

- Setting up a real-time platform for high-precision simulation of radar, lidar, and camera sensors

- Simulating traffic, vehicle dynamics, and electric drives in real time

- Running tests with easily adaptable scenarios in a virtual 3D environment

About the author:

Oliver Maschmann

Oliver Maschmann is project manager at ZF in Friedrichshafen and responsible for the setup and operation of the HIL test benches for full vehicle integration testing.