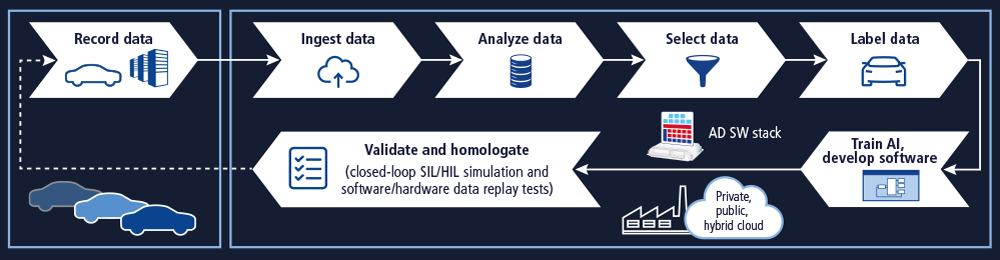

最佳基于数据驱动的开发解决方案包括一条完全集成的管道,用于自动驾驶中基于机器学习功能的持续开发。然而,在开发过程中仍有许多问题有待解决,例如格式和接口的不一致,这都是造成项目延误的常见原因。dSPACE 通过先进的技术方法和出色的专业知识确保成功应对数据管道方面的挑战。

由于现实世界和相应车辆环境有高度的复杂性,因此保证自动驾驶汽车可靠运行是一项十分复杂的任务。传统的问题解决方法(比如采用“假设-推理-反例”的简单逻辑比较)不足以应对这样的任务。前提条件数不胜数。开发人员必须采用不同的方法来解决这些复杂的问题。这种方法借助示范用例实现通用模型的自动参数化,而非通过手动编写代码来解决问题,同时确保最高的准确性和完整性。输入的数据越多(此过程通常称为训练),参数化结果就越接近预期结果,车辆也就越安全。这是一种相对有效的方法,可以让自动驾驶汽车为现实环境中的种种情况做好准备,而不必事先使用代码定义完整的环境,因为这完全不切实际。因此,开发过程被称为数据驱动或以数据为中心,而非以代码为中心。对于开发来说,我们必须根据数据调整流程,而不是通过现有流程发送数据。因此,数据、数据管道和配套工具将成为开发过程的重点。

车辆中的高级数据记录

数据管道的出发点是车辆。它会收集所有数据,然后在此过程中使用这些数据。在车辆层面,数据源指的是所有传感器、总线和网络,它们传输车辆内部传感器和 ECU 的信息。首先,我们需要在车辆和数据收集系统采取大量措施,从而优化数据管道。第一,记录器本身的车载基础设施和架构必须支持从 I/O 接口到数据存储的数据传输,速度要尽可能快,并且不能丢失数据。其次,并不是记录下来的所有数据都是必要的。只有一小部分数据确实很有价值,并且会产生影响。那么,为什么不尽早剔除多余的数据以节省存储空间以及时间呢?或者仅记录和存储有价值的数据,而丢弃多余的数据。这意味着我们需要一种技术实力强大并且高度灵活的数据记录系统,同时还需要将记录与存储相关联的功能,以确保只存储正确的数据。在这方面,用于智能数据记录的 dSPACE 解决方案可以助您一臂之力。它由数据记录硬件和软件、数据摄取和管理软件以及配套服务组成。此解决方案的核心组件是 AUTERA 产品系列,该产品系列专用于具有高要求 ADAS/AD 应用中的数据记录。RTMaps 是一款轻量级但功能强大的开发和记录软件, RTag 则是移动标记应用 。该解决方案可以与两者结合使用。dSPACE 还拥有专业的技术和独家技巧,能够对记录的数据量进行过滤,并精简为相关子集。我们的人工智能专家也将提供专业支持。如果需要,dSPACE 及其合作伙伴还可以为您提供专门的车辆设备和数据采集服务。

数据摄取 – 为开发人员提供数据

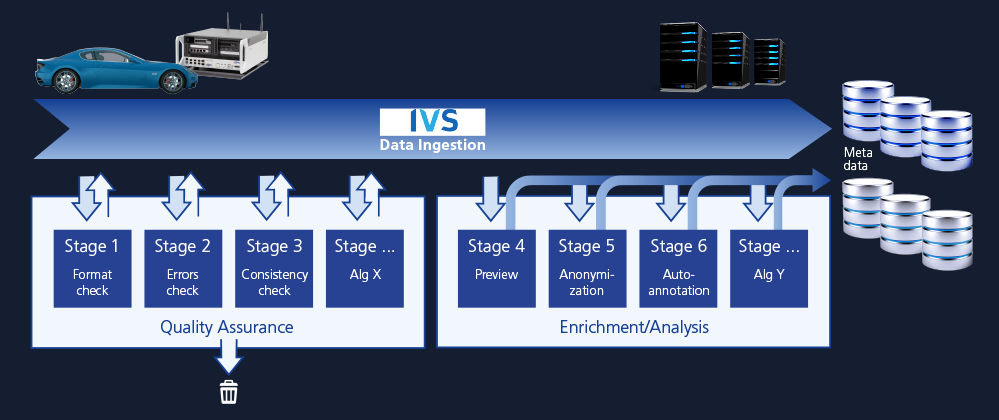

数据摄取管道是数据管道的一部分,可确保数据快速从车辆传输给软件工程师和测试工程师,并保证质量。这是数据管道的第二个阶段,此阶段的目标在于提高效率和质量。即使在记录过程中已经对数据进行了智能过滤,也能够对数据进行进一步的精简。例如精简摄像头数据,因为它特别耗内存。利用更高的计算能力和非实时处理,可以进一步精简数据。除了车辆本身,我们还可以从外部获取更多能源。如果之前未在车辆中对数据进行过滤,那么就会增加摄取中的数据缩减工作。我们需要过滤不需要的数据、损坏的数据或不完整的数据,这样才能提高效率。因为这些数据只会占用空间。如果未在车辆中执行这项质量检查,那么在管道中还有另一个阶段有机会实现这项检查,那就是摄取管道,您可以在这里执行检查从而最大限度地减少所需的存储空间,进而节省成本。质量控制包括格式检查、错误检查和一致性检查等。通过甄别数据的内容,可以进一步精简数据。由此可知,数据处理和某些元信息的提取也是在摄取管道中执行的。它不仅能够进行过滤和数据精简,还能优化搜索过程和数据组织。地图和传感器预览的生成可帮助实现可视化,并加快数据访问的响应速度。我们可以对预览进行自动匿名化处理,以确保符合 GDPR 法规。dSPACE 为数据摄取提供了全面的解决方案。高带宽 AUTERA Upload Station 及其热插拔 AUTERA SSD 可确保快速上传数据。Intempora 的 IVS 集成了本机传输协议以及用于插入定制处理模块以执行数据摄取的框架。默认情况下会包括质量检查、冗余内容精简、预览生成结果和标记模块。dSPACE 集团下属公司 understand.ai 提供的注释服务可帮助您以最高质量快速标记所选数据,即使是海量数据也能轻松驾驭。最后,我们还使用了 UAI Anonymizer,这是一种身份保护匿名处理工具,能够移除超过 99% 的可识别身份的人脸和车牌,并且其设计符合 GDPR、APPI、CSL 和 CCPA 的标准。

测试:安全至上

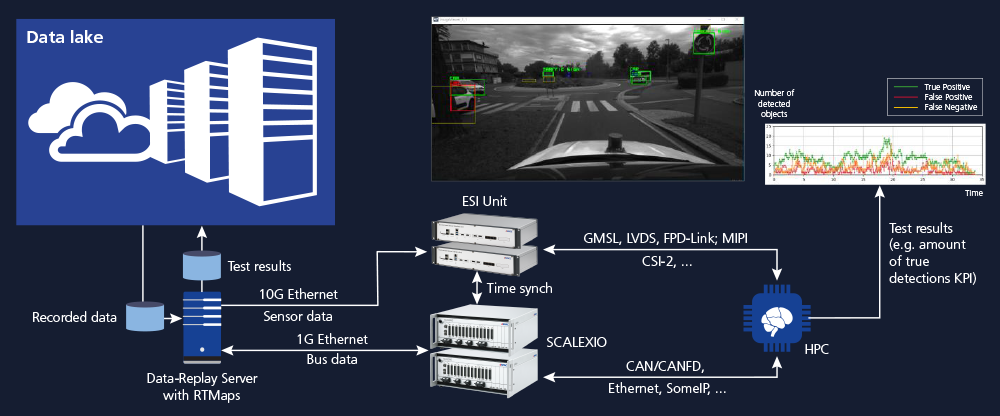

无论是常规驾驶场景还是极端驾驶场景,自动驾驶汽车必须保证安全性。自动化测试发挥着特别重要的作用,因为只有经过全面测试的车辆和车辆软件才能得到用户的信任,确保行驶安全。根据开发阶段的不同,有多种测试方法可供选择。dSPACE 产品提供的解决方案可将这些方法顺利集成并应用到每个平台和新车型的整体测试和认证流程中。数据回放测试(又称再处理)指的是将记录的传感器和总线数据回放到被测系统中,并根据地面实况数据评估其输出,这已成为 ADAS/AD 中的关键测试和验证方法。这种测试方法很高效且具有高性价比,其可以利用真实驾驶场景的数据来分析自动驾驶汽车的行为,这是对车辆进行全面安全评估的关键。数据访问及其同步流是数据回放的关键,帮助其在基于数据驱动开发管道中发挥重要作用。基于这种方法,我们可以在开发过程的早期进行纯软件 (SW) 数据回放测试,也可以在系统集成后的流程后期进行硬件 (HW) 数据回放测试。软件数据回放的运行通常不会受到实时条件影响,与实时形式相比速度更快,能够方便快捷地检查准确性。此外,早在获得硬件原型之前,就可以执行。另一方面,硬件数据回放还包括部署中央计算机或传感器 ECU,我们将连接到测试系统并提供记录的合成数据或真实数据。这样就可以在最接近道路测试的条件下测试硬件、电气接口以及软件。通过注入故障和操作数据流,可以进一步增强数据回放测试。越来越多的云服务用于数据回放测试。dSPACE 及其合作伙伴为基于公共云、内部部署和混合云基础架构的数据回放测试提供出色的解决方案。dSPACE 数据回放产品组合十分广泛,确保符合您的需求,包括用于精确总线和网络接口的模块化实时系统 SCALEXIO、用于传感器接口的强大 FPGA 板卡 Environment Sensor Interface (ESI) 单元、用于早期(虚拟)ECU 仿真的离线仿真器 VEOS,以及最先进的数据分析和传输软件 RTMaps。此外还有 IVS,这是一种数据管理软件,可轻松访问记录的相关数据集。所有这些工具都集成在一起,提供全自动测试解决方案,让您能够全天候测试,实现数千行驶公里与合成公里的驾驶测试。

数据扩充

目前的挑战不仅在于让自动驾驶汽车为已记录数据中的场景做好准备,还在于确保它们能够应对完全没有真实数据的全新未知场景。因为有些极端情况在现实中不可能实现或者因风险过高而无法测试。我们通过传感器真实仿真中人工创建的数据来扩充测试数据集。交通场景和环境可以从头开始创建,也可以通过合成真实的交通情况并操作其参数来创建。扩展测试数据集的第二种方法是使用记录的数据,然后将具有真实行为的合成交通参与者添加到场景中以操作记录的数据。dSPACE 产品现已支持对真实场景进行操作,dSPACE 和 understand.ai 开发的场景生成解决方案发挥了重要作用。通过该解决方案,您可以将真实驾驶测试的记录传送到仿真中,并且使用专用硬件和软件,通过仿真对安全关键型场景和真实驾驶场景进行数千次测试,十分便捷。这些场景可以手动更改和扩展,这在基于场景的测试中有很大帮助,此类测试需要执行大量场景。dSPACE 专为传感器真实仿真打造的 AURELION 解决方案是一款功能强大的全新工具,不仅用于高精度仿真,还可在仿真中操控真实场景。dSPACE 还提供了生成人工训练数据的方法,显著降低了开发成本。

无缝集成

如上所示,dSPACE、其集团下属公司和合作伙伴通过无缝集成的工具支持整个数据管道,确保数据的流畅性。基于 dSPACE 的工具和专业知识,即使在开发项目的瓶颈阶段,您也可以确保数据管道正常运行。