Application Areas

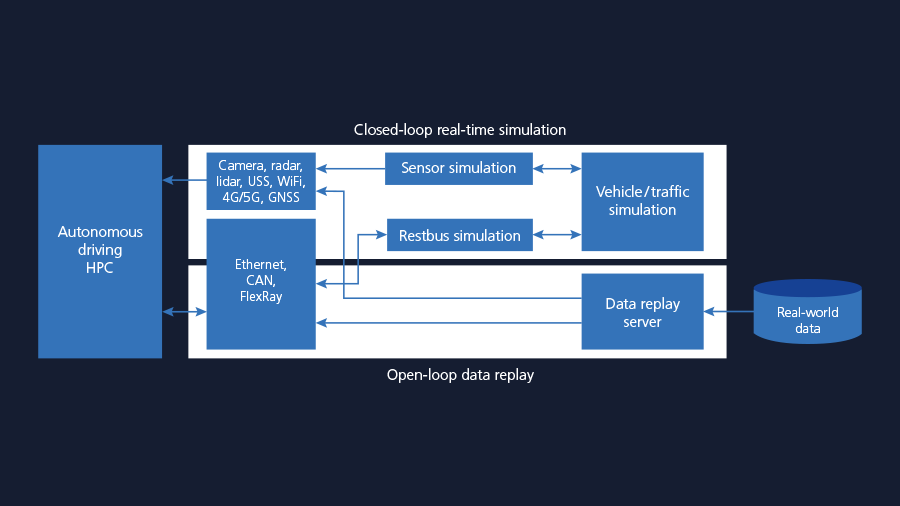

The dSPACE hardware-in-the-loop (HIL) test system, based on dSPACE’s SCALEXIO technology, is a modular and powerful platform for testing high-performance computers (HPCs) for autonomous driving in closed-loop and open-loop simulation. Closed-loop simulation is based on comprehensive models for vehicles, traffic, and the environment, as well as sensor models for camera, radar, lidar, and ultrasonic sensors. Restbus models and interfaces for Automotive Ethernet, CAN, LIN, and FlexRay cover the communication side of the HPC. Failure modes and manipulation options support fail-safe and security testing. All simulation components run time-synchronized in real time.

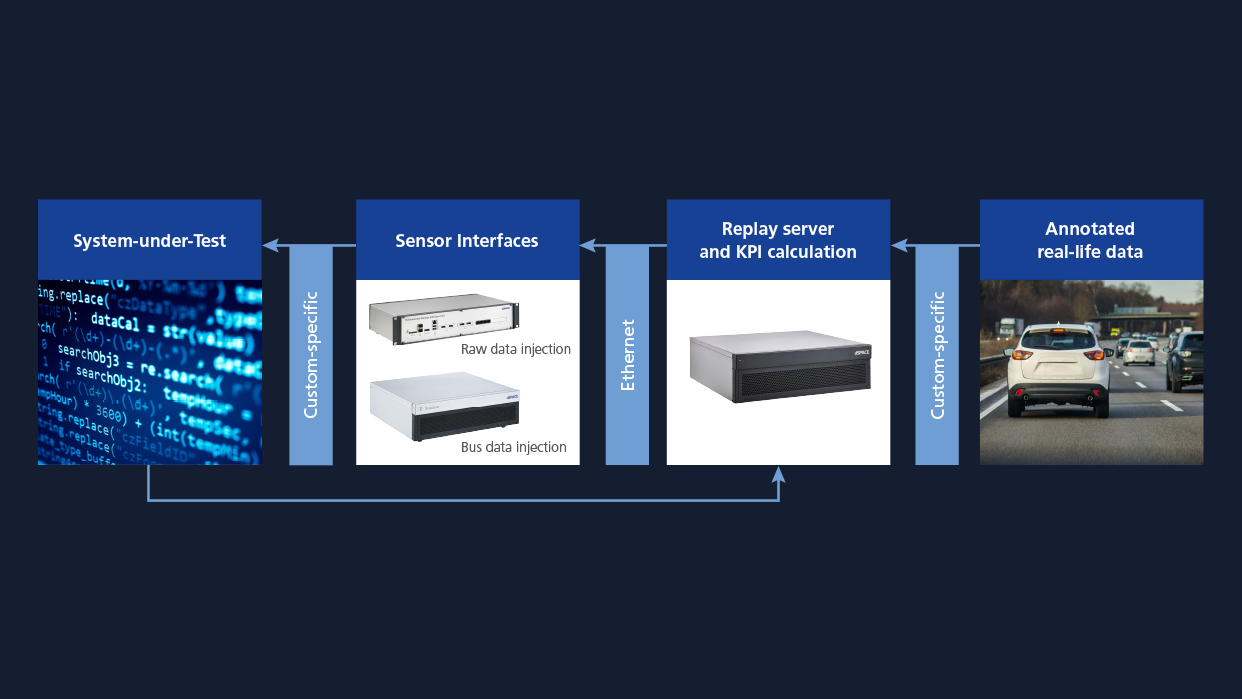

Using the SCALEXIO platform for open-loop simulation makes it possible to replay sensor and bus/network data captured during real test drives. Sensor fusion and perception algorithms can thus be tested with recorded real-world data.
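As an illustration of the replay idea, the following minimal Python sketch merges separately recorded streams into a single timeline ordered by capture timestamp, which is the order in which a replay system would have to inject them. It is not dSPACE code and does not use any dSPACE API; the `Sample` type, the stream names, and the nanosecond timestamps are assumptions made for the example.

```python
import heapq
from typing import Iterable, Iterator, NamedTuple


class Sample(NamedTuple):
    """One recorded item (hypothetical layout, for illustration only)."""
    t_ns: int       # capture timestamp in nanoseconds
    source: str     # stream name, e.g. "camera" or "can"
    payload: bytes  # raw frame or bus message


def replay_order(*streams: Iterable[Sample]) -> Iterator[Sample]:
    """Merge recordings that are each sorted by time into one timeline."""
    return heapq.merge(*streams, key=lambda s: s.t_ns)


# Two small example recordings, each already sorted by timestamp.
camera = [Sample(0, "camera", b"f0"), Sample(33_000_000, "camera", b"f1")]
can = [
    Sample(10_000_000, "can", b"\x01"),
    Sample(20_000_000, "can", b"\x02"),
    Sample(40_000_000, "can", b"\x03"),
]

timeline = list(replay_order(camera, can))
order = [s.source for s in timeline]
print(order)
```

The sketch only establishes the injection order. A real replay system additionally has to reproduce the original timing between samples deterministically, which is what the real-time hardware is for.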

- Comprehensive portfolio for closed-loop and open-loop testing of HPCs for autonomous driving

- Sensor-realistic models for camera, radar, lidar, and ultrasonic sensors

- Comprehensive restbus simulation for Automotive Ethernet, CAN, LIN, and FlexRay

- Closed-loop and open-loop/data replay testing

- Pixel- and point-accurate raw data injection for camera, radar, lidar, and ultrasonic sensors

- Sophisticated vehicle, environment, and traffic models

- Highly precise real-time synchronization

- Efficient utilization of GPU and FPGA technology

- End-to-end testing through V2X simulation solutions for 4G/5G, Wi-Fi, and GNSS

- Scenario-based testing, ISO 26262- and SOTIF-compliant testing

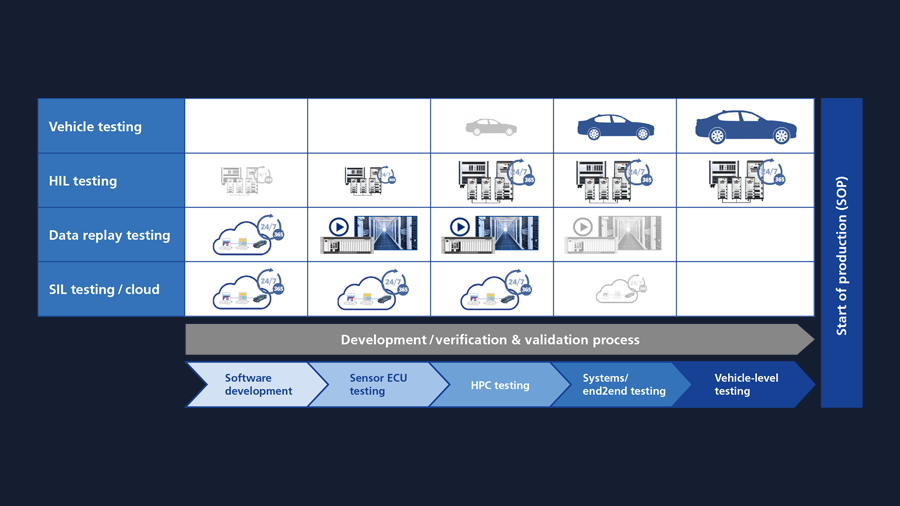

Seamless Testing from SIL and Cloud to HIL

dSPACE tools and solutions for testing autonomous driving HPCs are embedded in an overall validation architecture that allows maximum reuse of models and software tools across hardware-in-the-loop testing, software-in-the-loop testing, and cloud-based simulation.

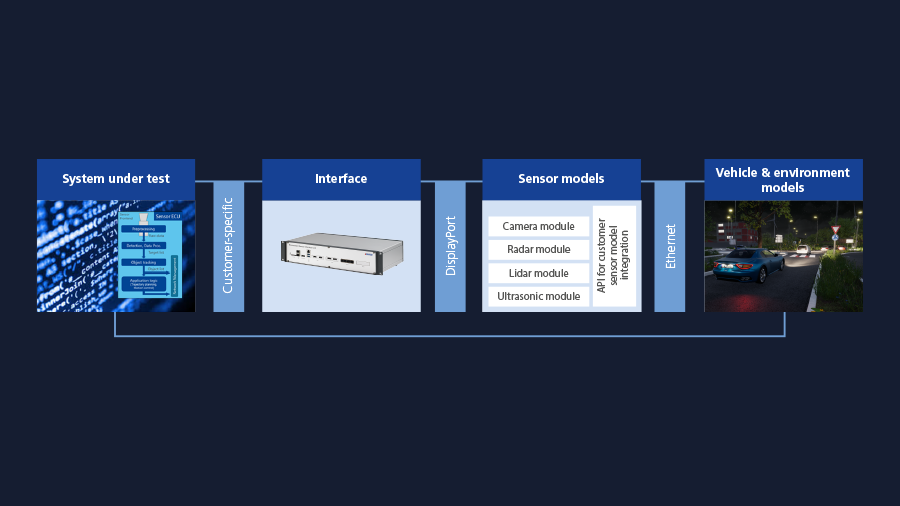

Sensor Simulation

The dSPACE HIL for Autonomous Driving solution offers sensor-realistic simulation for all sensor types, from camera and radar to lidar and ultrasonic sensors. Because the sensor models are physics-based, meaning that they simulate real physical effects such as reflections from surfaces, the generated raw sensor data, such as simulated camera images or lidar point clouds, is very accurate. The sensor models are executed using GPU and FPGA technology, making it possible to run tests under different weather conditions, such as fog, rain, and snowfall.

- Physics-based, sensor-realistic models using GPU and FPGA technology for highest processing power

- Open for customer-specific sensor models, e.g., through the Open Simulation Interface (OSI)

- Synchronous simulation of multiple sensors for complex AD sensor setups

- Support for multiple serializer/deserializer interfaces at up to 10 Gbit/s, enabled through a large partner network
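To put the 10 Gbit/s figure into perspective, the following back-of-the-envelope Python sketch estimates the raw data rate of a single camera stream. The resolution, bit depth, and frame rate are assumed example values, not specifications of any particular sensor or dSPACE product, and the estimate ignores blanking intervals and protocol overhead.

```python
def raw_video_gbit_per_s(width: int, height: int, bits_per_pixel: int, fps: int) -> float:
    """Raw pixel data rate in Gbit/s (no blanking, no protocol overhead)."""
    return width * height * bits_per_pixel * fps / 1e9


LINK_GBIT_PER_S = 10.0  # interface limit named in the list above

# Assumed example camera: 3840 x 2160 pixels, 12-bit raw, 30 frames per second.
rate = raw_video_gbit_per_s(3840, 2160, 12, 30)
utilization = rate / LINK_GBIT_PER_S

print(f"raw rate: {rate:.3f} Gbit/s, link utilization: {utilization:.1%}")
```

Under these assumptions, the stream needs just under 3 Gbit/s, so it fits on one such link with headroom. Higher resolutions or frame rates scale the requirement linearly.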

Your Benefits for Sensor Simulation

- Sensor models validated for the intended use

- Correct models for all automotive applications

- Simulation model complies with the real-time requirements

- Model and simulation validation for all parameterizations

- Proven interfaces for integrating Tier 1/Tier 2 sensor models

- Open interfaces (APIs) for customer-specific sensor models

- Support of standards, e.g., Open Simulation Interface (OSI) or Functional Mock-up Interface (FMI)

- Strong partner network, e.g., Velodyne Lidar, LeddarTech, Hella, NXP, Cepton

- Realistic environment simulation with dSPACE AURELION

- Sensor-realistic environment simulation for validating perception & driving functions

- Performant interfaces for IP-protected integration of third-party sensor models

- Realistic build of digital twins

Test Strategy

The development of ADAS/AD systems is a multi-stage, iterative process. dSPACE HIL for Autonomous Driving is therefore part of an overall test strategy provided and supported by dSPACE. This test strategy is based on state-of-the-art test methods and addresses the overall “safety first” challenge for automated driving.

The overall test strategy covers all relevant validation methods in ADAS/AD development, allowing effective validation of ADAS/AD systems throughout all phases of development. It is based on the automotive industry’s “Safety First for Automated Driving” white paper.

Along with real test drives and closed-loop HIL testing, data replay is a key test method for validating functions for automated driving. Because it provides the highest data fidelity and realism, data replay is mainly used for testing computer vision as well as AI-based perception and data fusion algorithms that have been trained using real-life data. During replay, data streams that were previously recorded in real vehicles by a large number of sensors, such as camera, radar, or lidar sensors, are injected into the device under test (DUT). At the same time, the DUT output is measured and compared with reference data (ground truth) to determine the key performance indicators (KPIs), which reflect the maturity level of the perception or data fusion algorithms running on the ECU.

By combining a high-performance, real-time-capable SCALEXIO system and an Environment Sensor Interface (ESI) unit, dSPACE offers a flexible system for the precise and deterministic data replay of an unlimited number of raw sensor and bus data streams of any kind for all development stages, including for close-to-production ECUs with end-to-end (E2E) security protection.
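The comparison of DUT output with ground truth can be sketched in a few lines of Python. The example below is a simplified illustration, not the dSPACE evaluation method: it assumes that both the DUT and the reference data deliver object positions as `(x, y)` coordinates in meters per frame, matches them greedily within a hypothetical distance threshold `max_dist_m`, and derives precision and recall as two example KPIs.

```python
from math import hypot


def match_frame(detections, ground_truth, max_dist_m=1.0):
    """Greedily match detected (x, y) positions to ground-truth positions.

    Returns (true_positives, false_positives, false_negatives).
    """
    unmatched = list(ground_truth)
    tp = 0
    for dx, dy in detections:
        # Nearest ground-truth object that has not been matched yet.
        best = min(
            unmatched,
            key=lambda g: hypot(g[0] - dx, g[1] - dy),
            default=None,
        )
        if best is not None and hypot(best[0] - dx, best[1] - dy) <= max_dist_m:
            unmatched.remove(best)
            tp += 1
    fp = len(detections) - tp  # detections without a ground-truth partner
    fn = len(unmatched)        # ground-truth objects the DUT missed
    return tp, fp, fn


def precision_recall(frames):
    """Accumulate counts over all frames and return (precision, recall)."""
    tp = fp = fn = 0
    for detections, ground_truth in frames:
        a, b, c = match_frame(detections, ground_truth)
        tp, fp, fn = tp + a, fp + b, fn + c
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Each entry: (DUT detections, ground-truth objects) for one replayed frame.
frames = [
    ([(10.0, 0.2), (25.0, -3.0)], [(10.3, 0.0), (40.0, 1.0)]),
    ([(12.0, 0.1)], [(12.2, 0.0)]),
]
precision, recall = precision_recall(frames)
print(f"precision={precision:.2f}, recall={recall:.2f}")
```

Greedy nearest-neighbor matching keeps the sketch short. An actual evaluation would typically use an optimal assignment and metrics tailored to the function under test.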