AURELION offers high-quality visualization and calculates realistic sensor data to test and validate your perception and driving functions.

What is AURELION?

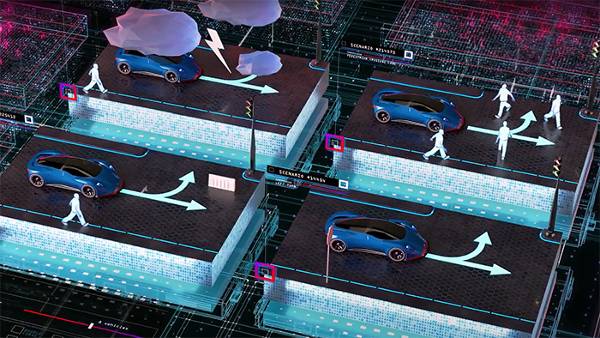

AURELION is a flexible software solution for sensor simulation and visualization. It enables you to integrate realistic sensor data into your processes for developing, testing, and validating perception and driving functions. The same models can be used throughout various phases of development, for example in hardware-in-the-loop (HIL), software-in-the-loop (SIL), and vehicle-in-the-loop (VIL).

- Tight integration with dSPACE simulation tools makes setting up AURELION quick and easy

- Our sensor simulation models make sure that you get the highest quality while still meeting your real-time demands

- An open interface for integrating custom sensor models brings the simulation even closer to reality

- Flexible integration of AURELION into third-party simulation tools brings our sensor simulation into your environment

Application Areas for AURELION

AURELION is suitable for a wide range of use cases and configurations. It can be used throughout various phases of development, for example, in hardware-in-the-loop (HIL) tests, software-in-the-loop (SIL) tests, vehicle-in-the-loop (VIL) tests, and for parallel validation in the cloud.

Examples of application fields:

- Automotive, autonomous vehicles, ADAS

- Agriculture

- Off-highway

- Automated mobile robots

Key Benefits for Sensor Simulation

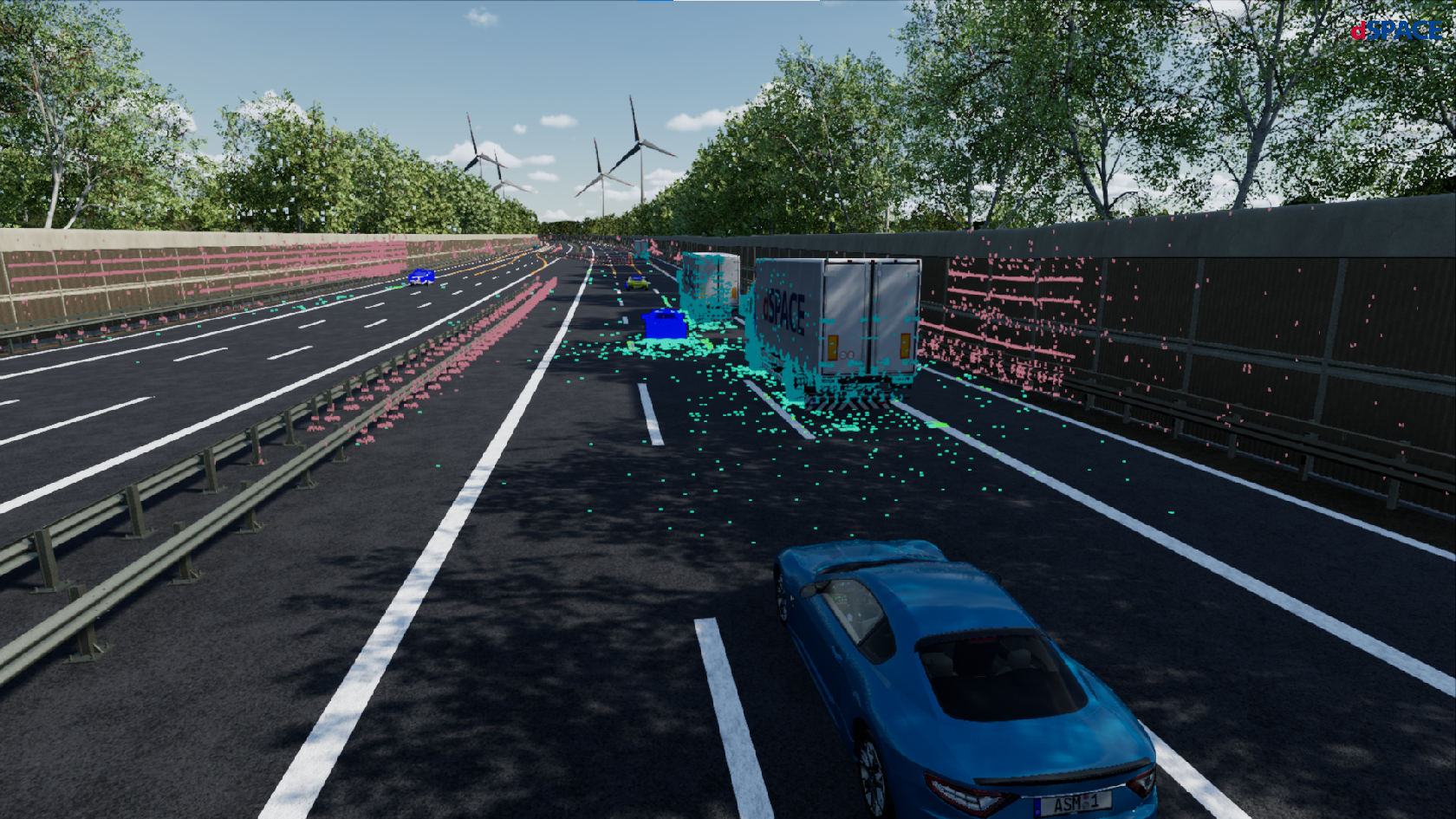

The 3D rendering engine, the high-precision dSPACE simulation models, realistic 3D assets, and high-resolution material information allow for the accurate simulation of automotive sensors and environments under different weather and lighting conditions.

Sensor Models

Camera Sensor Model

Realistically simulate a camera with a rectilinear or fish-eye lens and the corresponding sensor environment.

- High-fidelity graphics, lighting effects, and configurable realistic lens profiles

- Options for image modification and failure injection

- Configurable color filter for outputting raw sensor data

- Use ground truth information such as semantic segmentation, optical flow, and 2D bounding boxes to test and validate your algorithms
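To illustrate what a color filter does to produce raw sensor data, the following minimal sketch applies an RGGB filter pattern to a small RGB image so that each pixel keeps only one color channel. The function `apply_color_filter` and the `RGGB` table are hypothetical names chosen for this example and are not part of AURELION; the filter layout that AURELION actually uses is configured in the tool itself.

```python
from typing import Dict, List, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B)

# Assumed RGGB layout: channel index kept at each (row % 2, col % 2) position.
RGGB: Dict[Tuple[int, int], int] = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 2}


def apply_color_filter(
    image: List[List[Pixel]],
    pattern: Dict[Tuple[int, int], int] = RGGB,
) -> List[List[int]]:
    """Keep one color channel per pixel, as a color filter array would."""
    return [
        [pixel[pattern[(r % 2, c % 2)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(image)
    ]


rgb = [
    [(10, 20, 30), (11, 21, 31)],
    [(12, 22, 32), (13, 23, 33)],
]
raw = apply_color_filter(rgb)
print(raw)  # [[10, 21], [22, 33]]
```

A perception stack that expects raw data would then run its own demosaicing on such a single-channel image.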

Radar Sensor Model

Simulate highly realistic synthetic radar data for all injection layers in real time.

- Outputs channel impulse responses, raw data, detection lists, and object lists

- Radar-optimized materials and multipath raytracing reproduce effects seen in real-world measurements (e.g. ghost targets)

- Adaptive ray launching guarantees accurate results and optimal performance even in multi-antenna setups

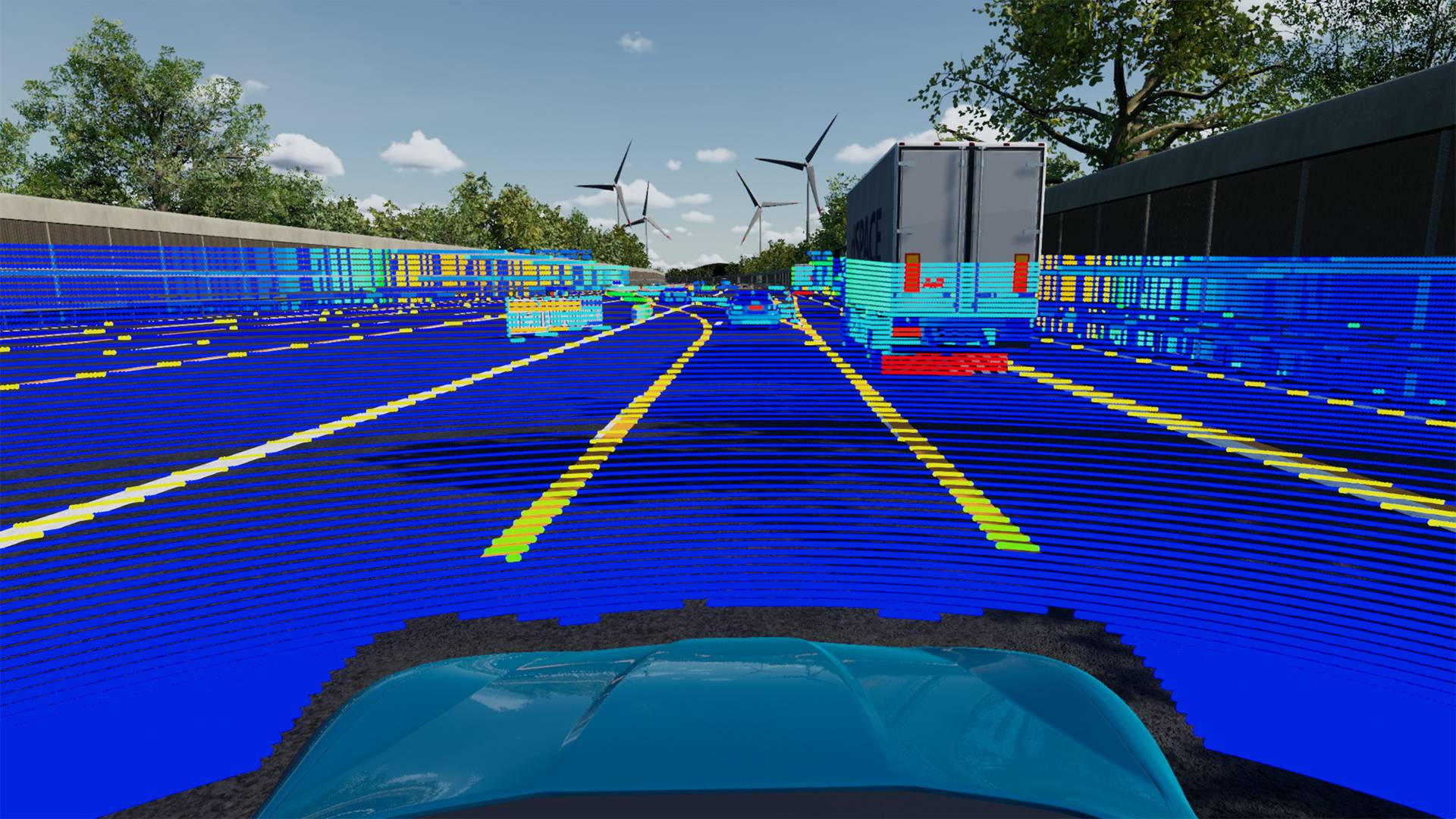

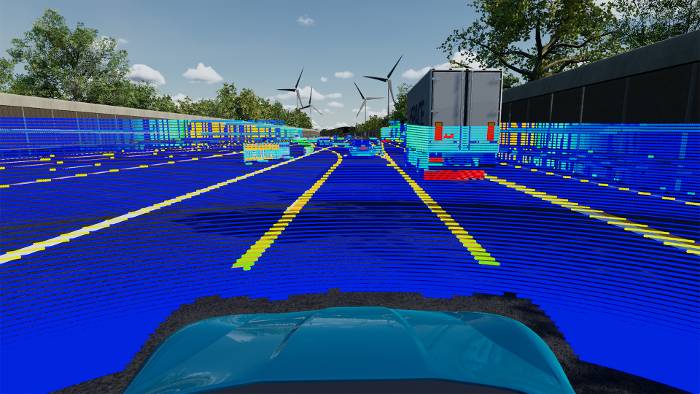

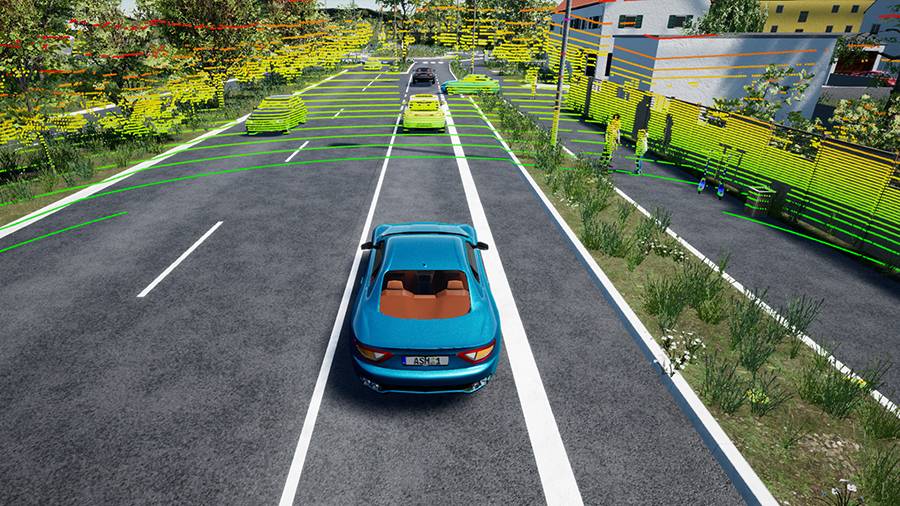

Lidar Sensor Model

Realistically simulate a lidar sensor and the corresponding sensor environment.

- Outputs a point cloud with realistic calculation of reflectivity values based on lidar-specific materials

- Support for scanning and flash-based sensors

- Realistic motion distortion effects with configurable time offsets for each ray
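The motion distortion effect mentioned above can be pictured with a simplified sketch. It assumes a sensor that moves at constant velocity without rotating, and static points that are each measured at their own time offset within one scan. The function names and the example values are assumptions made for this illustration and do not reflect how AURELION computes the effect internally.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def measured_point(world_point: Vec3, sensor_velocity: Vec3, time_offset: float) -> Vec3:
    """Position of a static point in the sensor frame at the moment its ray fires.

    Assumes the sensor starts at the origin, moves at constant velocity,
    and does not rotate during the scan.
    """
    return tuple(p - v * time_offset for p, v in zip(world_point, sensor_velocity))


def distorted_scan(
    world_points: List[Vec3], sensor_velocity: Vec3, time_offsets: List[float]
) -> List[Vec3]:
    """Assemble one scan without motion compensation, one time offset per ray."""
    return [
        measured_point(p, sensor_velocity, dt)
        for p, dt in zip(world_points, time_offsets)
    ]


# A flat wall 20 m ahead, scanned while driving toward it at 10 m/s.
wall = [(20.0, -1.0, 0.0), (20.0, 0.0, 0.0), (20.0, 1.0, 0.0)]
velocity = (10.0, 0.0, 0.0)
offsets = [0.0, 0.05, 0.1]  # seconds since scan start, one per ray

scan = distorted_scan(wall, velocity, offsets)
for point in scan:
    print(point)
```

In this example the wall appears slanted in the resulting point cloud, because rays fired later see it from a closer position.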

Ground Truth

To develop and test computer vision algorithms, ground truth information must be added to the sensor data. It is used for automated tests of perception algorithms and can also be used to train neural networks. Unlike ground truth derived from real-world recordings, AURELION generates the different ground truth variants pixel-perfect and for free, for every category, such as pedestrians, cars, and traffic signs.

- Special ground truth output available for camera, radar, and lidar

- The output is automatically parametrized to fit to your sensors

- Ground truth is synchronized to the raw sensor data and can easily be obtained via a C API

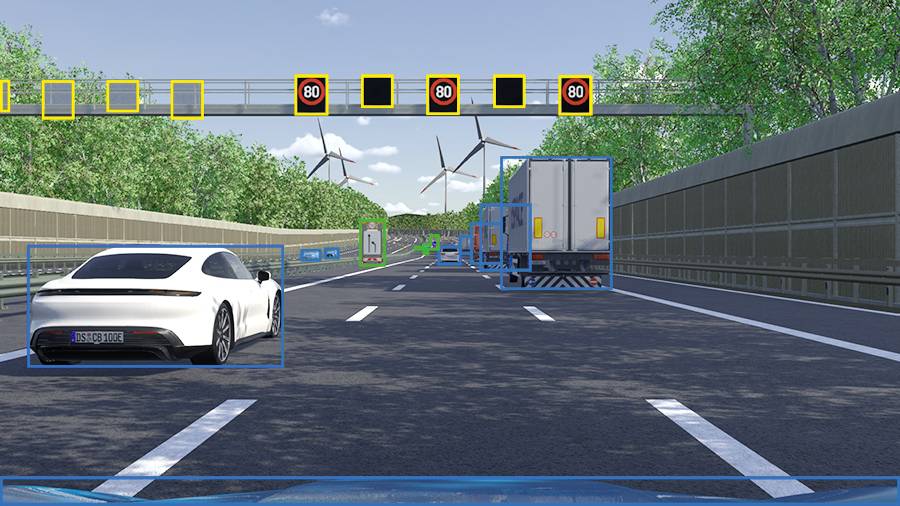

2D Bounding Boxes

2D bounding boxes are two-dimensional boxes that enclose objects in the camera image. They can be used to automatically test whether your perception algorithm correctly identifies the objects in your scene. The information is provided as binary data, so you can use it for automated tests in your build pipeline.

- Parameterized for your camera sensor to give out pixel-perfect bounding boxes

- Also includes information about the class and instance of every object

- Filtered for the type of objects you need

- Can be configured to integrate or discard obscured areas
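An automatic test of this kind could compare ground truth boxes with the boxes reported by a perception algorithm using intersection over union (IoU). The sketch below assumes the boxes have already been decoded into pixel coordinates; the `Box` type, the function names, and the 0.5 threshold are hypothetical choices for this example, and the binary format delivered by AURELION is not modeled here.

```python
from typing import List, NamedTuple


class Box(NamedTuple):
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a.x_max, b.x_max) - max(a.x_min, b.x_min))
    inter_h = max(0.0, min(a.y_max, b.y_max) - max(a.y_min, b.y_min))
    inter = inter_w * inter_h
    area_a = (a.x_max - a.x_min) * (a.y_max - a.y_min)
    area_b = (b.x_max - b.x_min) * (b.y_max - b.y_min)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def all_objects_detected(
    ground_truth: List[Box], detections: List[Box], threshold: float = 0.5
) -> bool:
    """True if every ground truth box is matched by at least one detection."""
    return all(
        any(iou(gt, det) >= threshold for det in detections)
        for gt in ground_truth
    )


ground_truth = [Box(0, 0, 10, 10)]
good_detection = [Box(1, 1, 11, 11)]
missed_detection = [Box(20, 20, 30, 30)]

print(all_objects_detected(ground_truth, good_detection))    # True
print(all_objects_detected(ground_truth, missed_detection))  # False
```

A check like this can run as a pass/fail step in a build pipeline, with the threshold tuned to the accuracy your project requires.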

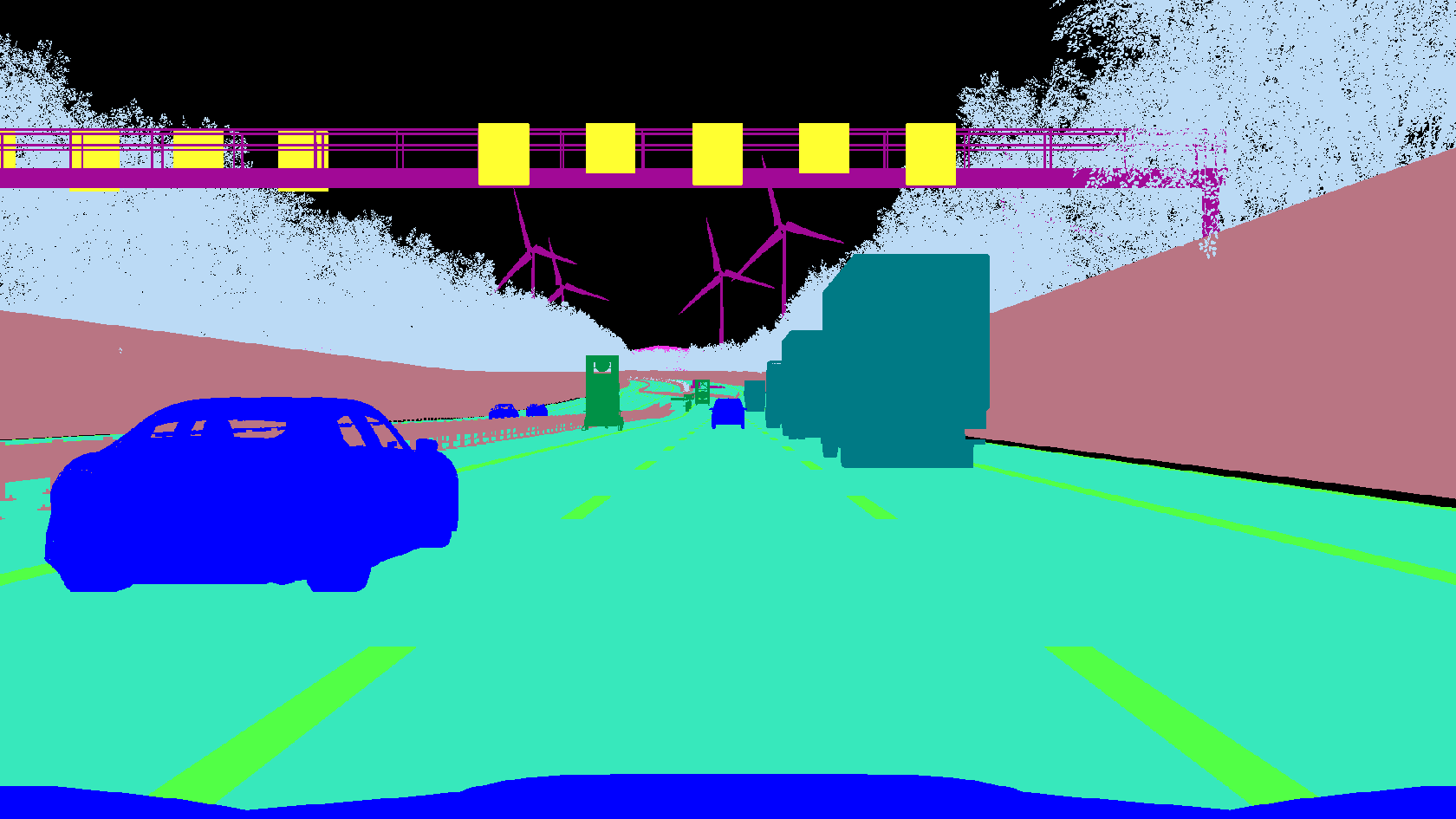

Semantic Segmentation

The semantic segmentation of images included in AURELION provides pixel-level annotations for image regions. For artificial intelligence testing and training, especially for autonomous driving, this information can be used to help neural networks learn which patterns or textures belong to which semantic class of objects.

Subsequently, such networks can be implemented for use cases as diverse as image generation, domain adaptation, lane detection, or drivable area detection.

- Customizable categories

- Same categories across camera, radar, and lidar

- Output category ID and instance ID
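As a sketch of how category IDs could be used to evaluate a segmentation network, the following example computes the per-category IoU between a ground truth map and a predicted map. Both maps are assumed to be already available as 2D lists of category IDs; the function name and the example IDs are hypothetical and not taken from AURELION.

```python
from collections import Counter
from typing import Dict, List


def per_category_iou(
    ground_truth: List[List[int]], prediction: List[List[int]]
) -> Dict[int, float]:
    """Per-category intersection over union of two category ID maps."""
    intersection: Counter = Counter()
    gt_count: Counter = Counter()
    pred_count: Counter = Counter()
    for gt_row, pred_row in zip(ground_truth, prediction):
        for gt_id, pred_id in zip(gt_row, pred_row):
            gt_count[gt_id] += 1
            pred_count[pred_id] += 1
            if gt_id == pred_id:
                intersection[gt_id] += 1
    categories = set(gt_count) | set(pred_count)
    return {
        c: intersection[c] / (gt_count[c] + pred_count[c] - intersection[c])
        for c in categories
    }


# Example IDs: 1 = road, 2 = car (assumed for illustration only).
ground_truth_map = [[1, 1, 2], [1, 2, 2]]
predicted_map = [[1, 1, 2], [2, 2, 2]]

scores = per_category_iou(ground_truth_map, predicted_map)
print(scores[2])  # 0.75
```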

Ground Truth for Active Sensors

Ground truth is also available for our active sensors such as radar and lidar. This includes, for example, information about the category and instance for each detection of a radar sensor and each point within a point cloud. This information can easily be obtained and used to train neural networks.

- Category IDs and instance IDs are identical across every sensor, so objects can be identified easily across the sensor data

- Different output modes require different kinds of ground truth information (obtain them easily via a C API)
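Per-point ground truth makes it straightforward to split a point cloud into labeled objects. The sketch below assumes each point has already been read into a tuple of coordinates plus category ID and instance ID; this tuple layout and the function name are assumptions for illustration and do not describe the data structures of the C API.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Assumed layout: (x, y, z, category_id, instance_id)
LabeledPoint = Tuple[float, float, float, int, int]
Point = Tuple[float, float, float]


def group_points_by_instance(
    points: List[LabeledPoint],
) -> Dict[Tuple[int, int], List[Point]]:
    """Collect the points of each object, keyed by (category_id, instance_id)."""
    groups: Dict[Tuple[int, int], List[Point]] = defaultdict(list)
    for x, y, z, category_id, instance_id in points:
        groups[(category_id, instance_id)].append((x, y, z))
    return dict(groups)


cloud = [
    (12.0, 0.5, 0.2, 2, 7),   # category 2, instance 7
    (12.1, 0.6, 0.3, 2, 7),
    (30.0, -2.0, 0.1, 2, 8),  # same category, different instance
    (5.0, 3.0, 1.0, 4, 1),
]

objects = group_points_by_instance(cloud)
print(len(objects))         # 3
print(len(objects[(2, 7)])) # 2
```

Because the IDs are the same across sensors, the same keys could be used to associate these point groups with radar detections or camera segmentation of the same objects.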

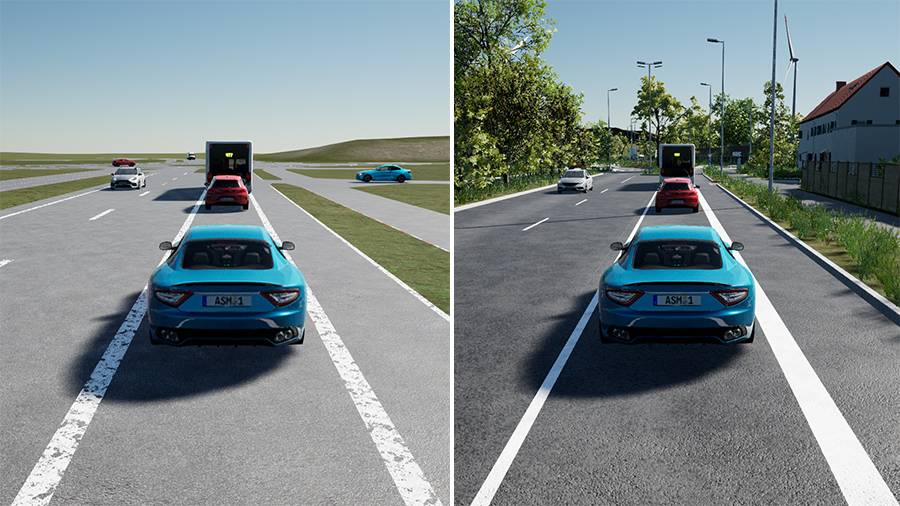

3D Environment

Our software supports standards such as OpenSCENARIO and OpenDRIVE, allowing a 3D environment to be generated automatically for you. This saves you time and effort, so you can focus on what matters most: your simulation.

- We provide tools to let you modify the road and also add 3D assets to the environment

- Roads and terrain are automatically generated based on OpenDRIVE files

- Our 3D asset library includes sensor-specific materials for sensor-realistic simulation

- For a quick start, we provide multiple high-fidelity environments across urban and highway areas

Various Customization Options

We understand that every simulation is unique, and sometimes you need to modify the road or 3D environment to meet your specific needs. That's why our software also provides you with the tools to make those modifications, giving you the flexibility and control to create a customized environment that meets your exact requirements.

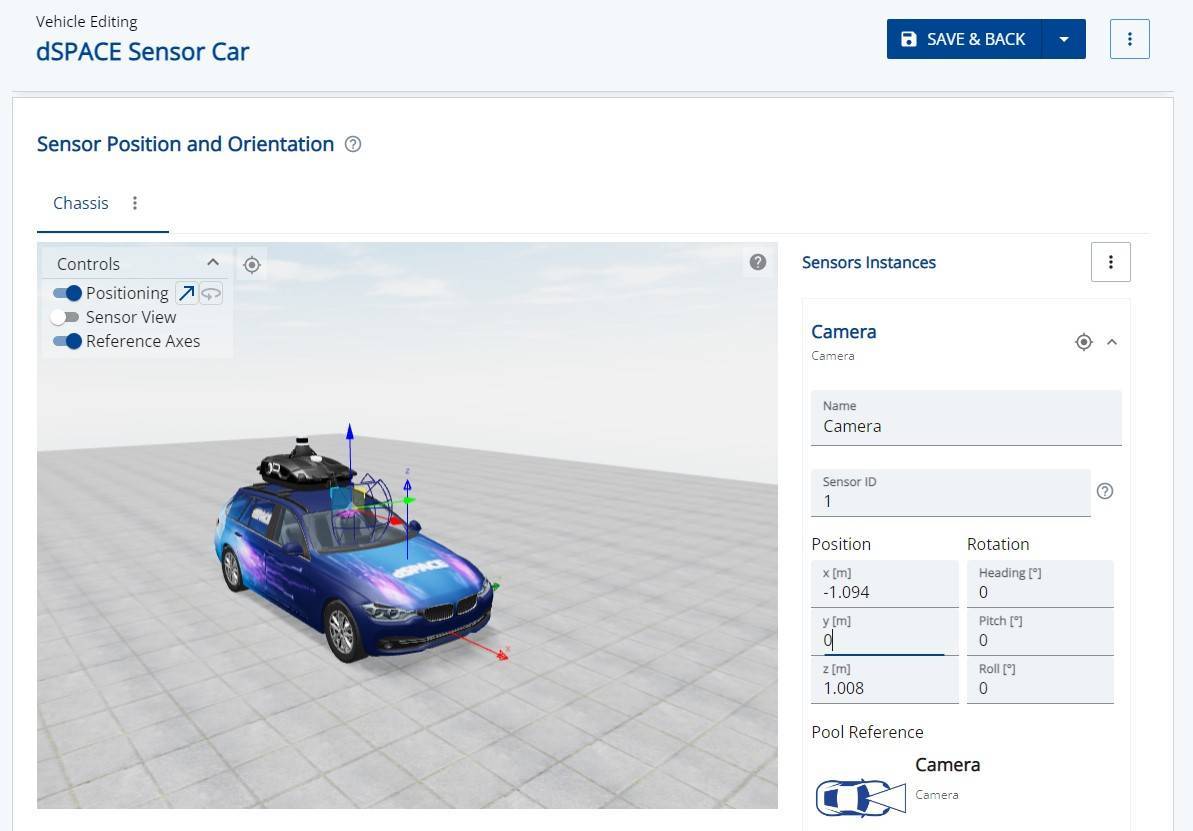

AURELION Manager

With AURELION Manager, you can parametrize your virtual sensors and place them on a 3D representation of your ego car, giving you complete control over your sensor configuration. Our software solution allows you to optimize your AURELION instances and streamline your workflow.

- Import a 3D representation of your car into AURELION

- Visualize your sensor instances on a 3D model of your ego car

- Make accurate decisions about your sensor placement

- Distribute the load by specifying which sensor should be calculated on which PC

- Integrate AURELION into your test environment seamlessly with the Automation API

Optional Products

Certified According to ISO 26262

AURELION was certified by TÜV SÜD according to ISO 26262 for safety-relevant systems in motor vehicles. The tool is thus suitable to be used in safety-related development projects according to ISO 26262:2018 for any automotive safety integrity level (ASIL).

This means: Vehicle manufacturers and suppliers can exclude AURELION from the ISO 26262 qualification of their entire processes and can concentrate on proving the functional safety of their own process chains.

Latest Version 24.1

This version introduces multiple usability improvements across AURELION and AURELION Manager to make your workflow more convenient.

- New overlay displays basic vehicle information in AURELION.

- Health state shows the status of the AURELION tool chain.

- AURELION product tour introduces the user interface.

- Added new HESAI AT128 lidar sensor model to the AURELION demos.

- Support of retroreflective object materials for visualization and camera.

... and much more.

Support for AURELION Users

Links to detailed technical information and customer service. Access may require registration.